Why You Should Worry If an AI Marks Your University Assignments

Last month, a very bright student came to me for advice. He had spent three whole weeks on his final research paper. He poured his heart into the work, and he made sure that every argument was perfect. However, when the paper came back from his lecturer, the feedback was very strange.

The comments lacked any human warmth, and the remarks simply flagged his unique voice as having “awkward phrasing.” I realised right away that a machine, and not a human, had marked his hard work.

He felt completely cheated. If you are a university student right now, you might be facing this exact same problem without even knowing it. Today, we need to talk about the hidden dangers of lecturers who use AI to mark your papers.

The Unfair Double Standard

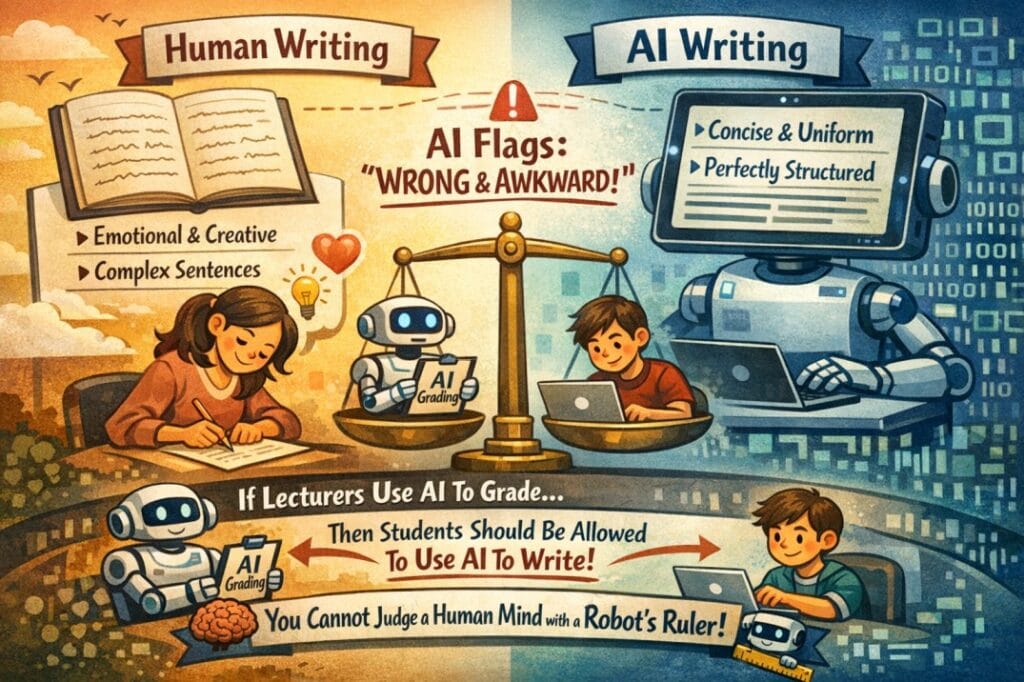

Right now, we see a very strange and unfair situation in our universities. Lecturers are constantly telling students that they must never use AI to write their assignments. They quickly label it as cheating.

However, these same lecturers use AI to mark those exact papers. We believe that this behaviour is simply not ethical. If you put your time, your energy, and your money into a university degree, you deserve a human reader who respects your effort.

It is not right that a student spends weeks on a paper, only for a lecturer to spend five seconds running it through a machine.

Why Human Writing Fails the AI Test

We must understand that human writing will never look exactly like ChatGPT's writing. As humans, we naturally write with emotion, and we use complex sentences to express our thoughts.

But AI has been trained to be extremely concise and perfectly uniform. When an AI tool reads a normal human essay, it will usually flag the text as wrong or awkward because it does not understand human creativity.

If lecturers insist that they want to use AI to mark your work, then it is only logical that they should allow students to use AI to write the papers entirely. This is the only way that the system can be fair. You cannot judge a human mind with a robot’s ruler.

The Lack of a Universal Standard

There is another massive danger that nobody wants to talk about. We currently have absolutely no universal standard for how universities use these tools.

Different lecturers use completely different AI programs. These programs are trained differently, and they have completely different capabilities. For example, your friend might get a high mark because her lecturer uses a very advanced AI, while you might get a low mark because your lecturer uses a basic, free tool that makes mistakes.

This means that your final grade depends more on the software that the lecturer chose than on the quality of your actual research.

It Is Time for Uniformity

Deep down, many of these lecturers are hiding behind the veil of technology. They avoid the hard work that comes with reading long essays, and they let the machine take the blame when a student gets a bad grade.

This needs to stop. We desperately need uniformity and honesty across all universities. If institutions want to maintain any trust with their students, they must create clear, universal rules about how these tools are used.

Conclusion

To conclude, we must face this unfair situation together. It is simply not right that universities punish you for using the very same tools that your lecturers use to mark your hard work.

Until we have a clear set of rules that every single university follows, we will always see grades that depend on a machine instead of your actual knowledge.

We need to demand a system that treats your human effort with the respect that it truly deserves. If institutions want to rely on a robot to read the papers that you submit, then it is only fair that they allow you to use a robot to write them in the first place.

